Sustainability monitoring in cloud computing is becoming increasingly important, especially as cloud infrastructures grow in size and complexity. At OSMC 2025, Josefine Kipke presented a practical and well-grounded talk on how environmental impacts can be measured in digitally sovereign cloud environments. Based on her work at the Open Source Business Alliance and the EcoDigit research project, she showed how sustainability metrics can be integrated into real production systems without losing sight of operational realities.

Digital Sovereignty and the Sovereign Cloud Stack

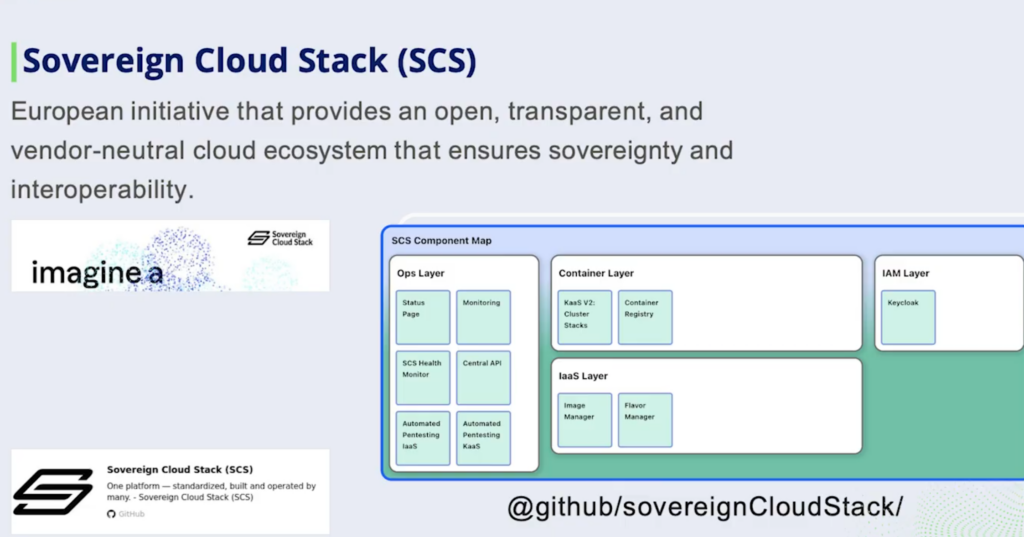

Josefine began by introducing the work of the Open Source Business Alliance, which plays an important role in promoting open source and digital sovereignty in Germany. One of its flagship initiatives is the Sovereign Cloud Stack (SCS). The goal of SCS is to create an open, interoperable, and vendor-neutral cloud ecosystem for Europe.

She broke SCS down into three core ideas. The first is certifiable standards, which help avoid vendor lock-in and make interoperability a real, testable property rather than a promise. The second is reference implementations that show how these standards can be applied in practice. The third is open operations, meaning that providers share documentation, operational experience, and lessons learned instead of treating them as competitive secrets.

Why Sustainability Monitoring in Cloud Computing is Challenging

A large part of the talk focused on why sustainability metrics are so difficult to get right in real-world clouds. In practice, cloud infrastructures are anything but uniform. Providers use different hardware, expose energy data in different ways, and run very different monitoring stacks.

On top of that, deployment models and lifecycle management vary widely, and even basic information – such as power consumption per device – is often missing or incomplete. All of this makes it difficult to compare results between environments or to trust sustainability metrics without a lot of caveats.

From Estimation Models to Runtime Validation

Within the EcoDigit project, the team initially worked with energy profiles, which estimate energy consumption based on system utilization. This approach quickly runs into a fundamental problem – if you can’t validate those estimates at runtime, how confident can you really be in the numbers?
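
To make the idea of an energy profile more concrete, here is a minimal sketch of my own, not code from the talk. It assumes the simplest possible profile: power draw rises linearly from an idle value to a full-load value as utilization goes up. The function names and the wattage parameters are hypothetical, and real profiles may well be non-linear.

```python
def estimate_power_watts(utilization: float, idle_watts: float, max_watts: float) -> float:
    """Estimate power draw by interpolating linearly between idle and full load.

    Assumption: a linear profile. This is an illustration, not EcoDigit's model.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return idle_watts + (max_watts - idle_watts) * utilization


def estimate_energy_kwh(samples: list[float], interval_seconds: float,
                        idle_watts: float, max_watts: float) -> float:
    """Sum the estimated power over equally spaced utilization samples."""
    joules = sum(
        estimate_power_watts(u, idle_watts, max_watts) * interval_seconds
        for u in samples
    )
    return joules / 3_600_000  # 1 kWh equals 3.6 million joules


if __name__ == "__main__":
    # One hour of samples at 50 % utilization, taken once per minute.
    print(estimate_energy_kwh([0.5] * 60, 60, idle_watts=100, max_watts=300))
```

The weakness is easy to see here: every number that comes out depends on `idle_watts` and `max_watts` being right, and nothing in the model itself can tell you whether they are.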

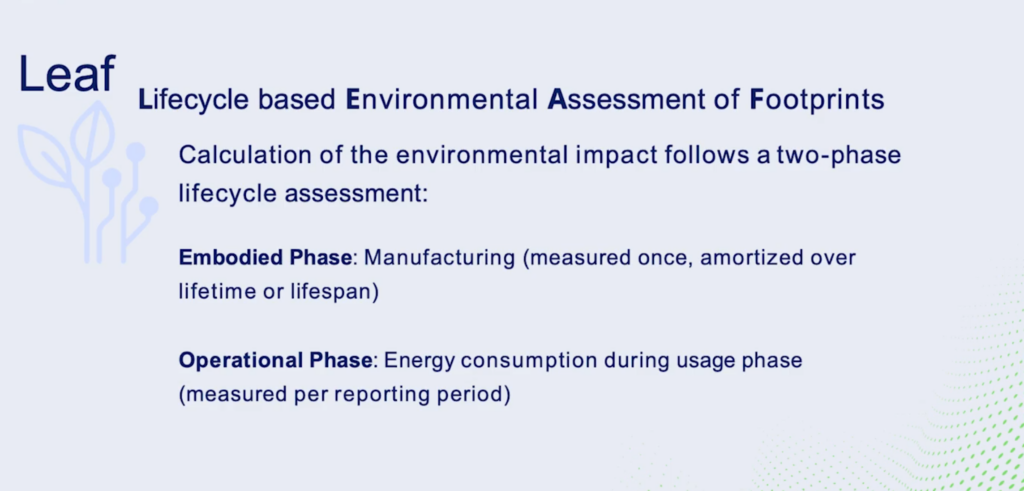

This question led the project toward combining estimation models with live monitoring data. The key distinction here is between embodied emissions (which come from manufacturing and transporting the hardware) and operational emissions (which result from actually running the systems). Embodied emissions can be spread over a device’s lifetime, but operational emissions need to be measured continuously if they are meant to inform real decisions.
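
The split between the two kinds of emissions can be sketched in a few lines. This is my own illustration under stated assumptions: embodied emissions are spread evenly over the device lifetime, and operational emissions are derived from measured energy multiplied by a grid carbon intensity factor. That factor, along with all function and parameter names, is an assumption of mine and was not part of the talk.

```python
def embodied_share_kg(total_embodied_kg: float, lifetime_hours: float,
                      window_hours: float) -> float:
    """Portion of a device's embodied emissions assigned to a time window.

    Assumption: even (linear) amortization over the expected lifetime.
    """
    if lifetime_hours <= 0:
        raise ValueError("lifetime_hours must be positive")
    return total_embodied_kg * (window_hours / lifetime_hours)


def operational_kg(energy_kwh: float, grid_intensity_kg_per_kwh: float) -> float:
    """Operational emissions for a window, from measured or estimated energy."""
    return energy_kwh * grid_intensity_kg_per_kwh


def footprint_kg(total_embodied_kg: float, lifetime_hours: float, window_hours: float,
                 energy_kwh: float, grid_intensity_kg_per_kwh: float) -> float:
    """Combined footprint: a static embodied part plus a dynamic operational part."""
    return (embodied_share_kg(total_embodied_kg, lifetime_hours, window_hours)
            + operational_kg(energy_kwh, grid_intensity_kg_per_kwh))
```

The embodied part only needs to be computed once per device and window length, while the operational part changes with every new energy reading.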

The Architecture Blueprint and LEAF

Instead of jumping straight into implementation, the team first defined a technology-agnostic architecture blueprint. This blueprint describes which data sources are required, how data should be processed, and how energy consumption can be attributed to both providers and tenants.

Based on this blueprint, they built a proof of concept called LEAF – Lifecycle-based Environmental Assessment of Footprints. LEAF works in two phases: a static phase for embodied emissions and a dynamic phase for operational emissions.

What convinced me most here was the decision not to replace existing monitoring setups. LEAF integrates with tools like Prometheus and includes fallback mechanisms when certain metrics aren’t available. That makes it much more realistic to deploy in heterogeneous environments, rather than only in idealized setups.
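
The talk did not go into how LEAF's fallbacks are implemented, so the following is only my own sketch of what such a chain could look like. It assumes the metrics have already been fetched from the monitoring system into a plain dictionary; the keys `measured_power_watts` and `cpu_utilization` are hypothetical names, not real Prometheus or LEAF metrics.

```python
from typing import Optional


def resolve_node_power(metrics: dict[str, Optional[float]],
                       idle_watts: float, max_watts: float) -> tuple[float, str]:
    """Return a power value in watts plus a label saying where it came from.

    Order of preference (an assumption for illustration):
    1. a real power measurement,
    2. an estimate derived from CPU utilization,
    3. a static default, here the idle power.
    """
    measured = metrics.get("measured_power_watts")
    if measured is not None:
        return measured, "measured"

    utilization = metrics.get("cpu_utilization")
    if utilization is not None:
        estimate = idle_watts + (max_watts - idle_watts) * utilization
        return estimate, "estimated"

    return idle_watts, "static_default"
```

Returning the source label alongside the value matters: a report can then state openly which numbers were measured and which were only estimated.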

From Infrastructure Metrics to Reporting

Another interesting part of the talk dealt with fair attribution. In OpenStack-based clouds, both the control plane and the data plane consume energy, and that consumption has to be distributed across tenants in a way that makes sense.

Josefine discussed different approaches, such as allocating energy based on virtual resources like vCPUs and memory, or using actual energy consumption data where available. Tools like Kepler play a key role here, since they already provide energy measurements for containers, virtual machines, and nodes. During the Q&A, it became clear that building on established tools like Kepler is a conscious decision – not everything needs to be reinvented.
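
As an illustration of the first approach, here is a minimal sketch of allocating a node's energy across tenants by their share of vCPUs and memory. It is my own example, not the method presented in the talk: the equal weighting between vCPUs and memory, the dictionary layout, and all names are assumptions.

```python
def attribute_energy(total_kwh: float, tenants: dict[str, dict[str, float]],
                     vcpu_weight: float = 0.5) -> dict[str, float]:
    """Split total_kwh across tenants by their share of vCPUs and memory.

    Assumption: each tenant's share is a weighted mix of its vCPU share and
    its memory share, with vcpu_weight controlling the balance.
    """
    total_vcpus = sum(t["vcpus"] for t in tenants.values())
    total_memory = sum(t["memory_gib"] for t in tenants.values())
    if total_vcpus == 0 or total_memory == 0:
        raise ValueError("tenants must have allocated vCPUs and memory")

    allocation = {}
    for name, t in tenants.items():
        share = (vcpu_weight * t["vcpus"] / total_vcpus
                 + (1 - vcpu_weight) * t["memory_gib"] / total_memory)
        allocation[name] = total_kwh * share
    return allocation


if __name__ == "__main__":
    tenants = {
        "tenant-a": {"vcpus": 2, "memory_gib": 8},
        "tenant-b": {"vcpus": 6, "memory_gib": 8},
    }
    print(attribute_energy(100.0, tenants))
```

A scheme like this charges tenants for what they reserved rather than what they actually consumed, which is exactly why real measurements are preferable where they exist.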

Standardization and Community Collaboration

Beyond the technical details, the talk strongly emphasized the importance of standardization. A standardized API for sustainability metrics would allow both providers and tenants to access comparable data and integrate it into their own systems.

One nice example of community collaboration was the integration with KaaKA, an open source Kubernetes scheduler. Sustainability scores calculated by LEAF can be used as an input for scheduling decisions, allowing workloads to be placed on more energy-efficient cloud infrastructures.
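
How the score is consumed by the scheduler was not shown in detail, so the following is a purely hypothetical sketch of the principle. It assumes that a higher score means a more energy-efficient target and that the scheduler has already filtered out targets that cannot run the workload; neither assumption comes from the talk, and the function is not part of any real scheduler API.

```python
def pick_target(scores: dict[str, float], eligible: set[str]) -> str:
    """Choose the eligible target with the best sustainability score.

    Assumption: higher score means more energy-efficient.
    """
    candidates = {name: score for name, score in scores.items() if name in eligible}
    if not candidates:
        raise ValueError("no eligible target has a sustainability score")
    return max(candidates, key=lambda name: candidates[name])


if __name__ == "__main__":
    scores = {"cloud-a": 0.62, "cloud-b": 0.81, "cloud-c": 0.90}
    # cloud-c is excluded, for example because it lacks capacity.
    print(pick_target(scores, eligible={"cloud-a", "cloud-b"}))
```

In practice the score would be one input among several, next to capacity, latency, and placement constraints.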

My Personal Takeaway

The emphasis on openness and standardization really stood out to me. Making sustainability data visible to tenants feels like an important step toward transparency and accountability in cloud computing. Just as importantly, the collaboration across multiple open source projects showed that tackling sustainability at this level is much more feasible when it’s done as a community effort.

Overall, this was a talk that didn’t just explain a difficult problem – it outlined a realistic path toward making cloud infrastructures genuinely more sustainable.

OSMC 2026 – Join us!

This year’s Open Source Monitoring Conference is happening from Nov 17 – 19 in Nuremberg. Join us as we celebrate a very special 20th anniversary edition! Stay tuned and get your Early Bird ticket!
